This blog post explores how transfer, synthetic media, and confirmation bias combine to break our shared sense of reality. The primary target audience is non-expert adults who are politically moderate or politically curious. They are not locked into a rigid framework and are likely to ask questions like “Is this real?” and “How should I feel about this?” These questions make them receptive to education. I chose my blog as the medium because the piece does not need to fight an algorithm to gain reach. It allows the audience to slow down, read the argument, process the information, and decide how they want to move forward. Unlike a quick informational video, it gives my target audience the time and space to digest the information I’m providing. They can make educated decisions without being rushed.

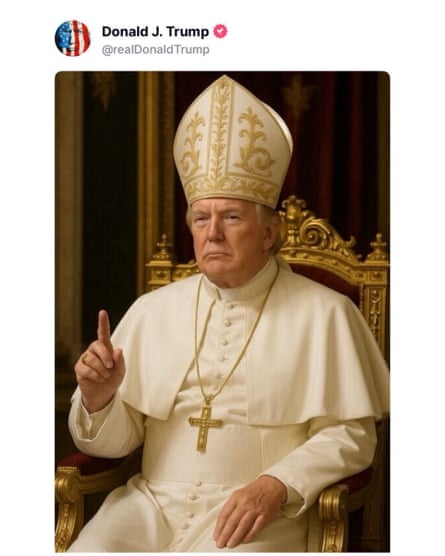

Earlier this month, President Donald Trump posted an image depicting himself as a god. In it, he appears to be healing an elderly man while a woman prays over them and three other figures, two of them a nurse and a military officer, look up at him. The background includes an American flag, angels descending from the sky, the Statue of Liberty directly behind the group, and bald eagles soaring overhead.

Whether the president posted this image to “troll” those against his administration or to pander to his followers is almost irrelevant. As AI-generated imagery continues to improve and become photorealistic, we are slowly witnessing a new genre of political iconography: AI-generated religious apotheosis.

On its surface, the picture of Trump as Jesus Christ might seem like an online satirical image uploaded by the President. However, for an average viewer, it can function as a potent psychological weapon. To understand the dangers of AI-generated religious apotheosis of political figures, we need to look into three mechanisms: transfer, deepfake technology, and confirmation bias.

Transfer

The transfer technique in propaganda takes the credibility, sanctity, or emotional weight of a symbol and attaches it to a person or product. The AI-generated photo of the president is the transfer technique in its purest form. It’s not uncommon to see a political ad that shows a candidate standing by a church or helping those in need. The issue is that generative AI goes further and presents the association as a literal visual claim.

In the United States, 62% of adults identify as Christian (Pew Research Center, 2025). When Christians stumble upon content in which a political leader is superimposed onto the visage of Jesus Christ, that content hijacks their religious loyalty. The viewer subconsciously associates the holy figure and the political figure as one. This phenomenon is not persuasion but rather a neurological short circuit. Researchers Michael Klincewicz, Mark Alfano, and Amir Ebrahimi Fard call this idea ‘slopaganda’: the use of AI-generated content to manipulate sentiment and build emotional associations that bypass rational agreement. As documented, “through repetition, those associations stick, even when the viewer knows the content is fake” (Klincewicz, Alfano, and Fard, 2025). Content pushed out by political figures, like the president, allows them to portray themselves however they see fit without having to wait for the media to do so. They are able to pander to the image their supporters already hold of them, which plays into the idea of slopaganda. This article draws a strong connection between the Rorschach test and the president’s followers: they believe that he could, and would, do what these AI-generated videos and photos depict, and that he is the kind of president they portray.

Deepfake

A deepfake is an artificial image or video generated by a kind of machine learning called “deep” learning. The term encompasses any synthetic media in which a person is depicted doing something they never did. The photo of Trump as Jesus Christ is an example of a deepfake of divinity.

Years ago, deepfakes had glitchy regions and sometimes garbled text. Thanks to advances in AI technology, today’s models can produce 4K-resolution images with perfect texture and lighting. Modern deepfakes are much more difficult to spot than in previous years; they have reached the point where they can even have heartbeats (Science Focus staff, 2026). “Modern text to image systems such as Stable Diffusion and DALL E can now generate images so realistic that they often appear completely natural, leaving little to no visible artifacts for traditional deepfake detectors to rely on” (Ameen, Islam, 2025). Authentic photos are susceptible to synthetic copies. Isaac Record and Boaz Miller argue that generative AI “allows ordinary computer users to create and widely share fictional worlds indistinguishable from the real world” (Record, Miller, 2025). The real slowly becomes dubious and the fake becomes gospel to all.

Confirmation Bias

Confirmation bias is people’s tendency to look for, or interpret, information in ways that are consistent with their existing beliefs. As I mentioned above, the image works like a Rorschach test. Depending on who you are and where your views align, you will see something different. For those who support the president, the AI image can feel like a prophecy. Dopamine can be released just by the sight of the visual because it matches the ideology you align with: Trump as a persecuted savior. Those who do not support the president, on the other hand, are likely to see the image as a blasphemous horror. That said, some of Trump’s supporters can react to the image the same way as those who do not support him. Former U.S. representative and Trump supporter Marjorie Taylor Greene commented on the image, “I completely denounce this and I’m praying against it!!!”

The synthetic nature of the image is irrelevant. Neither side, pro-Trump or anti-Trump, is checking the image to see if it is real. Instead, both are using the photo to confirm their beliefs, and this is how AI accelerates polarization. AI isn’t creating the bias; it fuels the bias that already exists on either side. Research has found that a voter’s political affiliation directly dictates how they process identical information. Partisans were “open and forgiving of an in-party politician’s transgression but critical and unforgiving of an out-party politician’s identical transgression” (Lee, Romdhane, 2025). One study found that simply telling someone a face belongs to a political ally or opponent changes how that face’s trustworthiness and characteristics are perceived (Cassidy, Hughes, Krendl, 2022). AI-generated images of political figures as divinity can be used as a weapon to exploit this vulnerability.

Although these images are lies, their harm runs deeper than deception. They damage three distinct domains: democracy, religion, and reality. They harm democracy by giving political figures a pass. When supporters genuinely draw a connection between their candidate and a divine being, it justifies undemocratic behavior by placing the candidate above the law. Supporters are likely to back their candidate’s unlawful actions because they see the candidate as divine. These images harm religion by reducing the influence of Jesus Christ to idolatry of a political figure. Once images of divinity are associated with a political figure, anything that figure does, good or bad, can reflect onto the divinity and change perceptions of the established image. Finally, they harm reality. As images like these surface more frequently, they can condition us not to care about their effects. As we “get used to” seeing these AI-generated photos, we become less likely to strengthen the mental models we need to defend ourselves against the rising tide of deepfakes. Generating fictional worlds that are indistinguishable from the real one erodes the very foundation of public reasoning. We cannot reason together or act if we do not agree on what happened.

Defense against mechanisms

As society continues to advance in its use of AI technology, it is imperative that we defend ourselves against transfer, deepfakes, and bias. Below is a guide to resisting such content:

Check your own bias first. Before you react emotionally, ask yourself whether you would react differently if this content depicted someone else. If your answer is yes, pause. You are biased.

Read laterally. As noted above, pixel-level detection is currently failing. To combat this, do your research. Check who posted the content and whether the account is known for satire, and look for content credentials.

Pause. Rather than immediately resharing content you see on your social media feeds, pause and wait. In the meantime, many users are fact-checking and analyzing the content to verify its validity. Slopaganda thrives on rapid emotional repetition.

The AI-generated photo of Donald Trump can be seen as an internet meme, but it goes deeper than that: it is a stress test of the 21st-century mind. Such content weaponizes transfer to hijack your reverence and exploits deepfakes to break your trust in visual media. It leans on confirmation bias in the hope of bypassing your logical side. As content like this becomes more common, it’s important to remember that it’s not just a meme you’re seeing. It is psychological warfare that banks on you to worship what you see and dismiss the ethics behind the content.

Get educated and learn to defend yourself.

References

Ameen, M. R., & Islam, A. (2025). Detecting AI-generated images via diffusion snap-back reconstruction: A forensic approach. arXiv preprint. https://arxiv.org/abs/2511.00352

Casad, B. J., & Luebering, J. E. (2026, March 30). Confirmation bias. In Encyclopedia Britannica. Retrieved April 30, 2026, from https://www.britannica.com/science/confirmation-bias

Cassidy, B. S., Hughes, C., & Krendl, A. C. (2022). Disclosing political partisanship polarizes first impressions of faces. PLOS ONE, *17*(11), e0276400. https://doi.org/10.1371/journal.pone.0276400

Klincewicz, M., Alfano, M., & Fard, A. E. (2025). Slopaganda: The interaction between propaganda and generative AI. arXiv preprint. https://arxiv.org/abs/2503.01560

Lee, S., & Romdhane, S. B. (2025). The politics of ethics: Can honesty cross over political polarization? Journalism and Media, *6*(1), 23. https://doi.org/10.3390/journalmedia6010023

Pew Research Center. (2025, February 26). Decline of Christianity in the U.S. has slowed, may have leveled off (2023-24 Religious Landscape Study). https://www.pewresearch.org/religion/2025/02/26/decline-of-christianity-in-the-us-has-slowed-may-have-leveled-off/

Record, I., & Miller, B. (2025, June 18). Ways of worldfaking: Identifying the threat and harm of synthetic media. Social Epistemology Review and Reply Collective, *14*(6), 57–65. https://social-epistemology.com/2025/06/18/ways-of-worldfaking-identifying-the-threat-and-harm-of-synthetic-media-isaac-record-and-boaz-miller/

Science Focus staff. (2026). Deepfakes have heartbeats: Why AI-generated faces are now nearly perfect. BBC Science Focus. https://www.sciencefocus.com/news/deepfakes-have-heartbeats

University of Virginia. (n.d.). Deepfakes. UVA Security, SAFE & Privacy. Retrieved April 30, 2026, from https://security.virginia.edu/deepfakes

Yafai, F. A., & El-Kholy, C. (2026, April 14). Slopaganda comes of age. New Lines Magazine. https://newlinesmag.com/spotlight/slopaganda-comes-of-age/
